10 December 2020

Dr Ian McLauchlin

HANNAH FRY

Hannah Fry came to Budleigh Salterton, just up the road from us as the crow flies (does it work with other birds?). She came with her radio mate Adam Rutherford, both famous for their series "The Curious Cases of Rutherford and Fry". But contrary to expectation they weren’t inseparable and Hannah appeared on her own this time. Adam waited till the day after. It was 'The Budleigh Salterton Literary Festival' and it was raining.

Daughter drove and granddaughter came along to keep me company. We were dropped at the door to the Chapel where the talk was due to take place, while daughter parked the car. Luckily they'd just opened the doors so we didn't have to queue in the rain. My first mistake of the weekend -

"Hello World -

While waiting patiently for the start, I looked around at the building and its intricate stone carvings and stained glass windows. Don't ponder too much on chapels or you might find yourself disturbed by various thoughts:

- blimey, hugely expensive to build

- why not use an existing church?

- we disagree with a few details of what they believe so we're going to strut off and do our own thing, at great expense

- why is what you believe, against all reason, the cue for the construction of a building? (Actually it does work for Burnley Football Club.)

- why have a special building at all (if you’re not a football club)?

While being disturbed, I noticed that all the overtly religious symbolism at the front of the chapel had been hidden behind a black sheet. I fantasised that she would suddenly tear a hole in the sheet and appear under a spotlight, red hair blazing, to a fanfare of trumpets. Daughter saw a trapdoor in the roof and said that it was more likely she'd bungee jump down from there. I couldn't help imagining her gently touching down then rebounding roof-

After a short introduction, Hannah walked calmly and conventionally from the vestry, quite unspectacularly, with no thick rubber bands around her ankles, clutching a remote control for the slide projector, and avoided tripping over the cheek mike strapped to her . . . cheek. Even though her television appearances show her walking slightly knock-

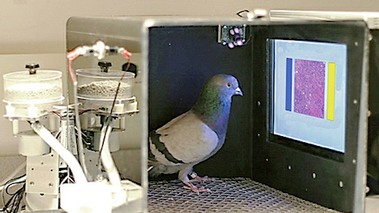

Best to grab their attention and jump straight in. Imagine 16 individuals being trained to spot breast cancer. You show them slides of biopsied tissue and they have to decide which is cancerous and which is cancer free. After a month's training their individual success rate was at least 80%. Not quite good enough. But if you showed the same images to different individuals and combined their responses, the accuracy rose to 99%! Far more reliable than a computer doing automatic image analysis. So what's noteworthy about that, I hear you say. The individuals were pigeons!
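
Why would pooling the answers help so much? Here is a rough back-of-envelope sketch of my own, not the method used in the study. It assumes each voter is right 80% of the time, that they all make their mistakes independently (a big assumption), and that the flock's verdict is whatever a strict majority says. The function name `majority_accuracy` is made up for illustration.

```python
from math import comb


def majority_accuracy(voters, p):
    """Chance that more than half of `voters` independent voters are right,
    when each one is right with probability `p`."""
    needed = voters // 2 + 1  # smallest number of correct votes that is a strict majority
    return sum(
        comb(voters, k) * p**k * (1 - p) ** (voters - k)
        for k in range(needed, voters + 1)
    )


# Odd flock sizes only, so there are no tied votes to worry about.
for flock in (1, 3, 7, 15):
    print(flock, round(majority_accuracy(flock, 0.8), 4))
```

Under those assumptions the combined verdict gets steadily more reliable as the flock grows, which is at least in the spirit of the pigeon result.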

So what are algorithms? They're simply sets of instructions which operate on data which is input in order to produce a result, which is output. So a summation algorithm would take in data, say, 1 2 3 4 8 3 7 9 and output 37.
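
For the curious, that summation algorithm can be written out in a few lines of Python. This is only my own sketch (the name `summation` is made up for illustration and didn't come from the talk): data in, instructions applied, result out.

```python
def summation(numbers):
    """Add up the input data one item at a time and return the total."""
    total = 0
    for n in numbers:  # the 'set of instructions': take each item and add it on
        total += n
    return total


data = [1, 2, 3, 4, 8, 3, 7, 9]  # the input
result = summation(data)          # the output
print(result)  # 37
```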

Bach wrote some very recognisable music. So here are two recordings, one of real Bach music and one of computer generated Bach-

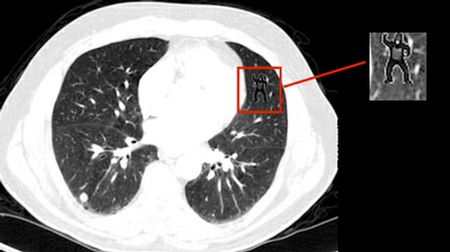

Often the search for fine detail can overlook the big picture. A group of radiographers was being assessed on their ability to discern fine detail. They examined an X-ray photo for any tiny abnormality and did so with diligence and application. They studied every part of the X-ray with scrupulous attention and declared that they could find no abnormality.

Unfortunately, the assessors, for fun but also to test a point, had included an image of a gorilla in the X-ray. Nobody spotted it.

Algorithms can get it right and get it very wrong. People tend to think that Artificial Intelligence, powered by algorithms, is reliable and always right. A group of Japanese tourists hired a car in Australia to visit a famous tourist attraction. Their satnav showed them the shortest distance which was, of course, a straight line. The tourists had failed to notice that the destination was an island, and blindly followed the satnav instructions.

Talking generally about AI, we can classify characteristics of the output of an algorithm. You can decide how you make use of input data to produce output of varying sensitivity (how many of the genuine cases it catches) and specificity (how many of the healthy it correctly clears). So if you're looking at diagnosing cancer, a setting that chases sensitivity alone would say that 'everybody has cancer', which is clearly of no use. If you had less specific output, you might find that 'everybody was alive' -
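
To pin the two terms down: sensitivity is the share of genuinely ill people that a test catches, and specificity is the share of healthy people that it correctly clears. A rough sketch with made-up data (ten imaginary patients, two of them ill; the function name is my own invention) shows why a test that flags everybody is useless despite never missing a case.

```python
def sensitivity_and_specificity(truth, flagged):
    """truth[i] is True if person i really has the disease;
    flagged[i] is True if the test says they do."""
    pairs = list(zip(truth, flagged))
    true_pos = sum(t and f for t, f in pairs)
    false_neg = sum(t and not f for t, f in pairs)
    true_neg = sum(not t and not f for t, f in pairs)
    false_pos = sum(not t and f for t, f in pairs)
    sensitivity = true_pos / (true_pos + false_neg)  # share of the ill who are caught
    specificity = true_neg / (true_neg + false_pos)  # share of the healthy who are cleared
    return sensitivity, specificity


# Made-up example: 10 people, 2 of whom really have cancer.
truth = [True, True] + [False] * 8

flag_everybody = [True] * 10  # "everybody has cancer"
flag_nobody = [False] * 10    # "nobody has cancer"

print(sensitivity_and_specificity(truth, flag_everybody))  # (1.0, 0.0)
print(sensitivity_and_specificity(truth, flag_nobody))     # (0.0, 1.0)
```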

The algorithm used in an automatic soap dispenser was unintentionally too sensitive to colour. So it would work for a white hand but not for a black one! The makers had neglected the bleedin' obvious that hands could be any colour at all while still needing soap.

In another example a camera had been programmed to check that a face was suitably ready before taking the photograph. It would warn "Did somebody blink?" and wouldn't take the photo till that somebody had stopped blinking. Turns out that the people who'd devised the algorithm had failed to take account of the features of Far Eastern people;

Yet another example of AI, and of people's general inclination to put absolute trust in it, is the 'facial recognition' algorithm. The Metropolitan Police once thought that it was a good idea to patrol the streets of London with a van stuffed full of cameras and associated computer paraphernalia and examine the faces of the passing public to try to find known criminals. They had a large number of 'positives'. BUT 98% of these turned out to be false. (Angle, lighting, clothing etc. interfered.) Nevertheless, the Head of the Metropolitan Police said she was comfortable with that. Just like she was comfortable with her salary . . . .
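
How can a system that sounds quite good produce so many false alarms? Mostly because wanted faces are rare in a crowd. The sketch below is purely illustrative: the crowd size, the number of wanted people and both rates are numbers I've invented (and chosen so the answer lands near 98%), not the Met's actual figures, and `false_alarm_share` is a made-up name.

```python
def false_alarm_share(crowd, wanted, hit_rate, false_alarm_rate):
    """Fraction of all alerts that point at an innocent passer-by."""
    true_alerts = wanted * hit_rate                     # wanted people correctly flagged
    false_alerts = (crowd - wanted) * false_alarm_rate  # everyone else wrongly flagged
    return false_alerts / (true_alerts + false_alerts)


# Hypothetical day out for the van: 100,000 faces, 50 of them on the watch list.
# The system spots 80% of the wanted and wrongly flags 2% of everyone else.
share = false_alarm_share(crowd=100_000, wanted=50, hit_rate=0.8, false_alarm_rate=0.02)
print(f"{share:.0%} of alerts are false")  # 98% of alerts are false
```

With these invented numbers there are about 40 genuine alerts swamped by roughly 2,000 false ones, even though the system is wrong about only 2 innocent people in every 100.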

Tesco realised before any other business that data was available that would give it the edge over rivals. What data? The shopping habits of its customers, that's what. So it introduced the Tesco Club Card. Customers would think it was all about being rewarded for shopping at Tesco while Tesco KNEW that it was a way of profiling customers and their preferences. But even Tesco hadn't reckoned on family members using a single club card. One day, a wife was looking at her club card purchases and discovered an entry for a packet of condoms. Strange, she thought. I would never buy those . . . She asked Tesco about it. After a hasty, sweaty and fraught conference, during which the irony of the fact that Tesco knew a husband was having an affair before his wife did sank in, they decided that discretion was needed, said that Tesco had made a mistake and wiped the offending record.

On a similar theme, some insurance company bright spark realised that there was a correlation between people who did home cooking and those who were a good insurance risk. But how to tell who was a home-

In another example, an algorithm promoted by a technical company was relied on totally for a period, but consistently produced strange and often glaringly bad results. On closer inspection it was found that the algorithm simply consisted of an Excel spreadsheet, poorly constructed and containing irrelevant or very poor data. Yet the user had assumed that the Company was legitimate and that the algorithm did what they said it did. Lesson? Don't believe the snake oil salesmen and don't assume that because it contains an algorithm it must be technically correct and supremely accurate in its operation.

There's currently quite some interest in driverless cars or, to be more specific, cars that drive themselves. But that concept throws up a whole new series of dilemmas, often not appreciated and still less discussed. What should the bottom line be in devising the algorithms that the system is based on? Assuming that the vehicle can detect everything around it, should it, for example, allow an older person to die in order to avoid a younger person? Or should it work on the principle that ALL collisions should be avoided? Imagine the consequences of that becoming widely known. Cyclists could bully cars. No-

The way that the Google search engine algorithms are set up is pertinent too. Hannah is a Maths Professor. When she Googles 'Professor of Mathematics', all the images are male. Yes, the majority are male but there are some female ones. Google, if it wanted, could increase the priority of female Maths Professors in its search results. This might re-

If you were guilty and had the choice of being sentenced by a judge or by an algorithm, which would you choose? Opinion was divided. One answer was "An algorithm, because I don't trust the judges that I know!" But many opted for a judge, possibly because a judge is human and, unlike an algorithm, has an appreciation of context. They could be misguided. It's been found that judges can behave erratically. Sentences are harsher:

1. if the judge's local team has just lost

2. if it's the wrong day of the week

3. if the offence is one against a woman and the judge has a daughter

Algorithms can equally get it wrong and one made a sentencing decision purely on the age of the defendant. A young person whose crime was minimal and was unlikely to re-

So it's essential that context and common sense are taken into account, something sometimes lacking in judges and always lacking in algorithms.

People are inclined to believe that artificial intelligence and the associated algorithms are infallible. Marketing people have noticed that and, as always, are taking advantage, resulting in some very odd advertising. For example:

Fridge is AI ready

Microwave with AI programming

Vacuum cleaner with AI bag-

Just be sceptical and bear in mind that these are marketing ploys. Hannah has found that a good test is to substitute the word MAGIC for AI. That tells you all you need to know!

Applause applause and a few questions. I wanted to know how much of her radio programme "The Curious Cases of Rutherford and Fry" was ad-

The empty Waterstone's pantechnicon should have alerted me. There was the obligatory book signing and, most importantly, purchase afterwards.

I forced daughter at umbrella point to buy the book and, in turn, was instructed by granddaughter to take a photo. She'd been promised £1 by her dad if she had her photo taken with Hannah Fry. I tried to foul up the shot, but my amazing camera, which didn't even cry "Foul" while looking out for blinking eyes, wouldn't let me.

On the way out, I couldn’t help examining the audio-